*The Pragmatic Summit, San Francisco — February 2026*

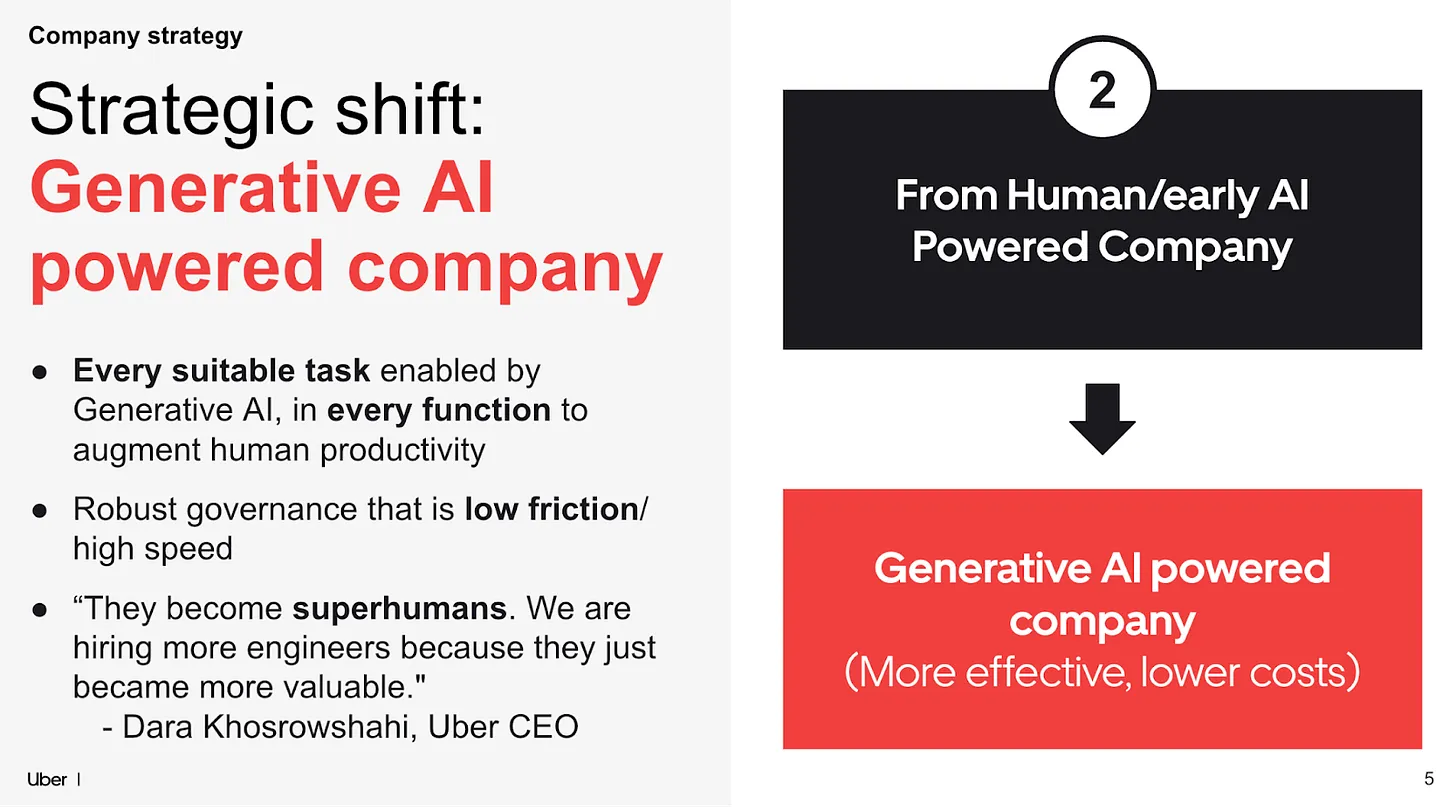

Uber is one of the most instructive AI case studies in enterprise technology right now — not because everything went perfectly, but because they're honest about what it actually costs.

At The Pragmatic Summit in San Francisco this month, Uber's engineering leadership shared a detailed look at how they've rolled out AI tools across nearly 3,000 people in their tech function. The results are genuinely impressive. 92% of Uber's developers now use AI agents monthly. 31% of code is AI-authored. 11% of pull requests are opened entirely by agents.

That's not a pilot programme. That's a company that has structurally changed how software gets built.

But buried at the end of the headline numbers is the figure that should get every CFO's attention: **AI-related costs are up 6x since 2024**, and token cost optimisation is now a dedicated priority.

Six times. In roughly twelve months.

---

## How You Get to 6x Without Noticing

The mechanics are worth understanding, because Uber isn't unusual here — they're just further along than most.

The shift from single-agent to multi-agent workflows is where costs stop being predictable. Uber's engineering leads describe how developers naturally fall into a pattern of running multiple parallel agents simultaneously — one task kicks off, there's a wait, so another agent gets started. Then another. The behaviour is rational at the individual level. At the organisational level, it's a multiplication problem that compounds every time you hire an engineer or roll out a new tool.
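To make the multiplication concrete, here is a minimal back-of-envelope sketch. Every input (headcount, agents per engineer, runs per day, tokens per run, price per million tokens) is a hypothetical assumption chosen for illustration, not a figure from Uber's talk.

```python
from dataclasses import dataclass


@dataclass
class UsageAssumptions:
    """Illustrative inputs only; none of these figures come from Uber."""
    engineers: int
    parallel_agents: float          # average agents each engineer runs at once
    runs_per_agent_per_day: float
    tokens_per_run: int
    usd_per_million_tokens: float   # assumed blended price
    working_days: int = 21


def monthly_spend(a: UsageAssumptions) -> float:
    """Estimated monthly token spend in USD: every factor multiplies."""
    tokens = (a.engineers * a.parallel_agents * a.runs_per_agent_per_day
              * a.tokens_per_run * a.working_days)
    return tokens / 1_000_000 * a.usd_per_million_tokens


# Scenario 1: each engineer runs one agent at a time.
single = UsageAssumptions(1000, 1, 10, 200_000, 5.0)
# Scenario 2: 20% more engineers, each running three agents in parallel.
multi = UsageAssumptions(1200, 3, 10, 200_000, 5.0)

print(f"single-agent: ${monthly_spend(single):,.0f}")
print(f"multi-agent:  ${monthly_spend(multi):,.0f}")
print(f"ratio: {monthly_spend(multi) / monthly_spend(single):.1f}x")
```

Under these assumptions, a 20% rise in headcount combined with three parallel agents per engineer multiplies spend by 3.6, even though no single engineer did anything unreasonable.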

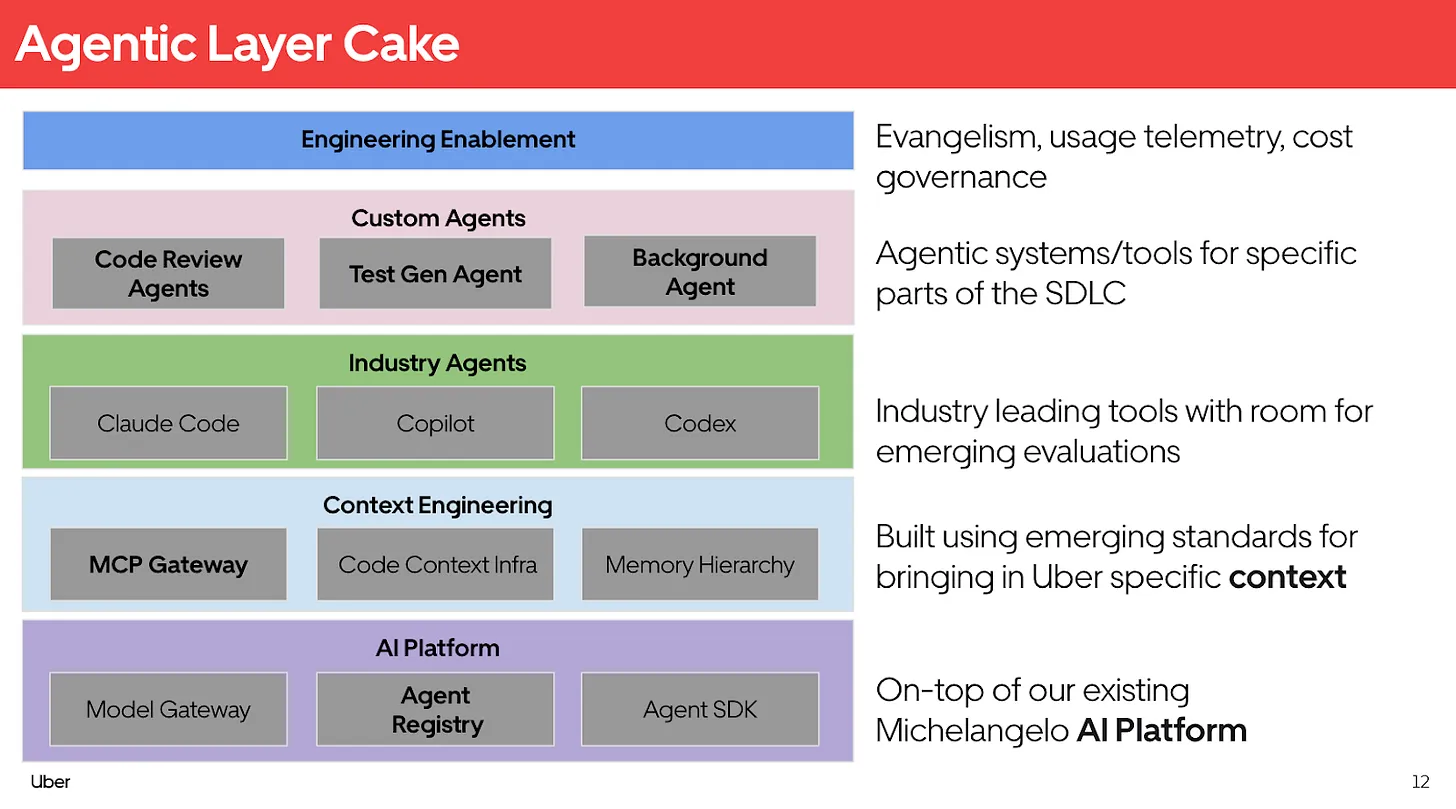

*Uber's "Agentic Layer Cake": cost governance sits in the top layer, Engineering Enablement*

Uber explicitly lists "controlling costs" as a function of their engineering enablement layer — the same layer that handles tool education and efficiency measurement. That's telling. Cost governance didn't emerge from the finance team. It emerged from engineering, because that's where the spend was visible.

That's the structural problem most enterprises haven't solved yet.

---

## The Finance Team's Blind Spot

In most organisations, AI API spend hits a cloud bill. It gets aggregated under infrastructure or IT. It might carry a label like "OpenAI" or "AWS Bedrock" next to a number that's growing faster than any other line item on the page.

What it doesn't carry is attribution. Which team? Which use case? Which agent is burning the most tokens on the least valuable work? Is that 6x cost increase generating 6x the output — or 6x the background noise from agents nobody is reviewing?
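As a rough sketch of what attribution means in practice, assume each model call is tagged with a team and a use case when it is made. The record shape, the tag names, and the blended price below are all hypothetical; real provider exports and billing data will look different.

```python
from collections import defaultdict

# Hypothetical usage records: one row per tagged batch of model calls.
records = [
    {"team": "payments", "use_case": "code-review", "tokens": 1_200_000},
    {"team": "payments", "use_case": "test-generation", "tokens": 800_000},
    {"team": "maps", "use_case": "code-review", "tokens": 3_000_000},
    {"team": "maps", "use_case": "untagged", "tokens": 5_000_000},
]
USD_PER_MILLION_TOKENS = 5.0  # assumed blended price, for illustration only


def spend_by(records, *keys):
    """Sum USD spend per combination of the given tags, largest first."""
    totals = defaultdict(float)
    for r in records:
        group = tuple(r[k] for k in keys)
        totals[group] += r["tokens"] / 1_000_000 * USD_PER_MILLION_TOKENS
    return dict(sorted(totals.items(), key=lambda kv: kv[1], reverse=True))


by_team = spend_by(records, "team")
by_team_and_use_case = spend_by(records, "team", "use_case")

for group, usd in by_team_and_use_case.items():
    print(f"{' / '.join(group):<30} ${usd:,.2f}")
```

In this made-up data, the largest single line is the untagged bucket, which is exactly the spend nobody can explain. Without tags applied at the point of use, the cloud bill only ever shows the total.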

Uber is now asking those questions at the engineering layer. Most companies don't have the platform maturity to ask them at all.

And in the meantime, the CFO signs off on a quarterly cloud bill that includes a number they can't decompose, forecast, or defend to the board.

---

## What Governing This Actually Looks Like

Token cost optimisation isn't a finance problem by accident. It's a finance problem because tokens are a unit of consumption that behaves like headcount or cloud compute: spend scales with usage, responds to policy, and has a direct relationship to business output.

The companies that get ahead of this won't wait for engineering to build internal cost dashboards. They'll treat AI spend as a budget category with the same governance applied to any other material operating expense: visibility by cost centre, forecasting by use case, accountability by owner.
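A minimal sketch of that governance loop, assuming each cost centre has a named owner and a monthly AI budget. The cost-centre codes, owners, and amounts are invented, and the straight-line forecast is a deliberate simplification; a real forecast would model each use case separately.

```python
from dataclasses import dataclass


@dataclass
class BudgetLine:
    cost_centre: str            # hypothetical cost-centre code
    owner: str                  # person accountable for the spend
    monthly_budget_usd: float
    spend_to_date_usd: float


def forecast_month_end(line, day_of_month, days_in_month=30):
    """Straight-line projection from the run rate so far this month."""
    return line.spend_to_date_usd / day_of_month * days_in_month


def over_budget(lines, day_of_month, days_in_month=30):
    """Return (cost centre, owner, forecast) for lines projected to overrun."""
    flagged = []
    for line in lines:
        forecast = forecast_month_end(line, day_of_month, days_in_month)
        if forecast > line.monthly_budget_usd:
            flagged.append((line.cost_centre, line.owner, round(forecast, 2)))
    return flagged


lines = [
    BudgetLine("ENG-PLATFORM", "a.owner", 50_000, 30_000),
    BudgetLine("ENG-MOBILE", "b.owner", 40_000, 12_000),
]
flagged = over_budget(lines, day_of_month=15)

for cost_centre, owner, forecast in flagged:
    print(f"{cost_centre}: forecast ${forecast:,.0f}, escalate to {owner}")
```

Run mid-month, this flags the overrun while there is still time to act on it, rather than after the quarterly bill arrives.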

Uber is learning this the hard way — with sophisticated internal tooling and a dedicated focus on optimisation. Most enterprises will learn it the same way, just with less infrastructure to fall back on.

The question for finance leaders isn't whether AI costs will become a governance challenge. They already are. The question is whether you'll have visibility before or after the next quarterly surprise.

Taiken is building financial governance tooling for enterprise AI spend. If you're a CFO or VP of Finance managing material AI budgets, apply for a design partnership.